Workshop Overview:

In several areas of astronomy the sensitivity of our searches for some types of signals is computationally limited. That is, either faster computers or better algorithms would lead to more discoveries in the same datasets. This is certainly true for many cases in gravitational-wave data analysis. Improved algorithms are also critical in the rapidly developing field of time domain astronomy, where transient signals from a variety of interesting astrophysical phenomena, ranging from the Solar System to cosmology and extreme relativistic objects, must be discerned in massive data streams.

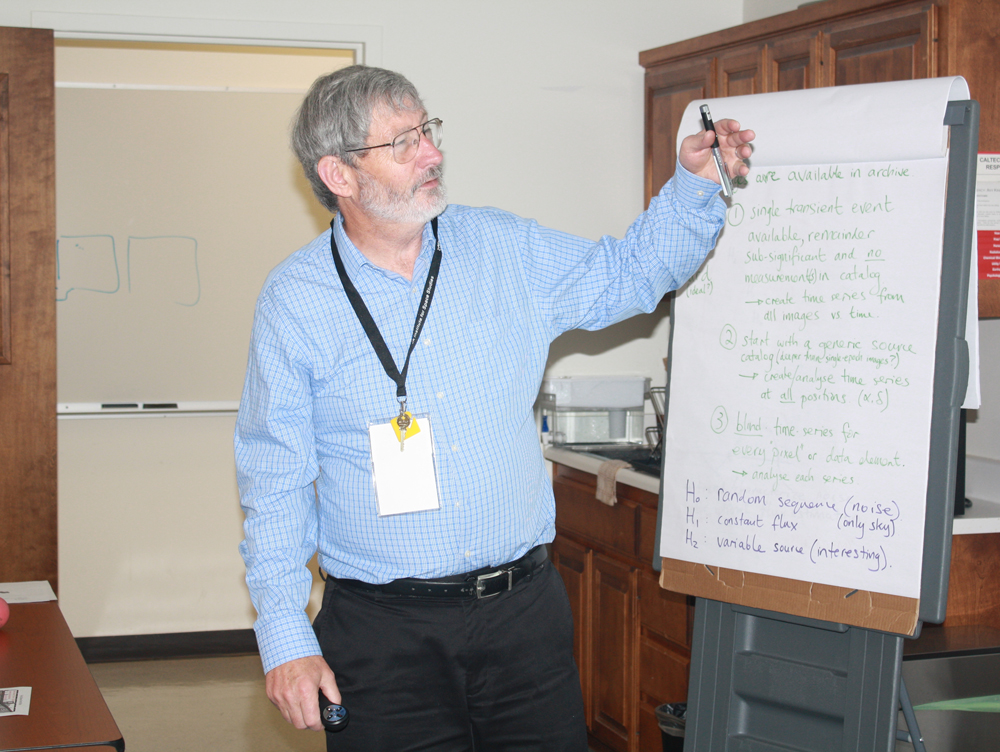

We plan to limit our investigations to time series, which could be light curves of sources detected in multiple images, or output from a detector like LIGO. The challenge has two related sides:

- detection of faint and/or transient signals, and

- their classification/characterization, which informs the detection process through the design of optimal detection algorithms, and is essential for the follow-up prioritization of the detected signals and events.

Our technical goal is to develop a few realistic benchmark problems on which the methods can be compared, keeping in mind computational resources and available architectures. In practice, we plan to define two or three specific, timely, astrophysically motivated challenges to guide our thinking and serve as methodological testbeds.

Our aim is to compile a practical guide to the best available methods for attacking these problems. Our work should lead to an improved understanding and useful rules of thumb regarding the advantages and scaling properties of different methods, which can be carried over to other data analysis challenges.